TPAMI (IEEE Transactions on Pattern Analysis and Machine Intelligence) is a leading journal in the fields of computer vision and pattern recognition, sponsored by IEEE. Among the four A-level journals in artificial intelligence recognized by the China Computer Federation, it ranks first. According to the latest statistics, TPAMI has an impact factor of 18.6, placing it among the top Q1 journals in computer science and artificial intelligence, with a CiteScore of approximately 35 and an h5-index of 217. Since its founding in 1978, the journal has consistently driven advances in pattern analysis, machine learning, and computer vision, publishing numerous seminal works with a profound impact on global AI research and applications.

The acceptance of Professor Lu Yu’s latest research by TPAMI marks another significant milestone for our faculty and research team, highlighting a new and important breakthrough in the fields of artificial intelligence and computer vision.

Paper Title: Complementary Text-Guided Attention for Zero-Shot Adversarial Robustness

Vision-language models are transforming the way machines perceive and understand the world. Models such as CLIP, with their powerful zero-shot capabilities, have been widely applied across multiple domains. However, when confronted with carefully crafted adversarial perturbations, these models can produce incorrect predictions, limiting their reliability in real-world applications. Systematically understanding the potential risks posed by adversarial attacks and developing effective mitigation strategies is therefore critical to ensuring the trustworthiness and robustness of AI systems.

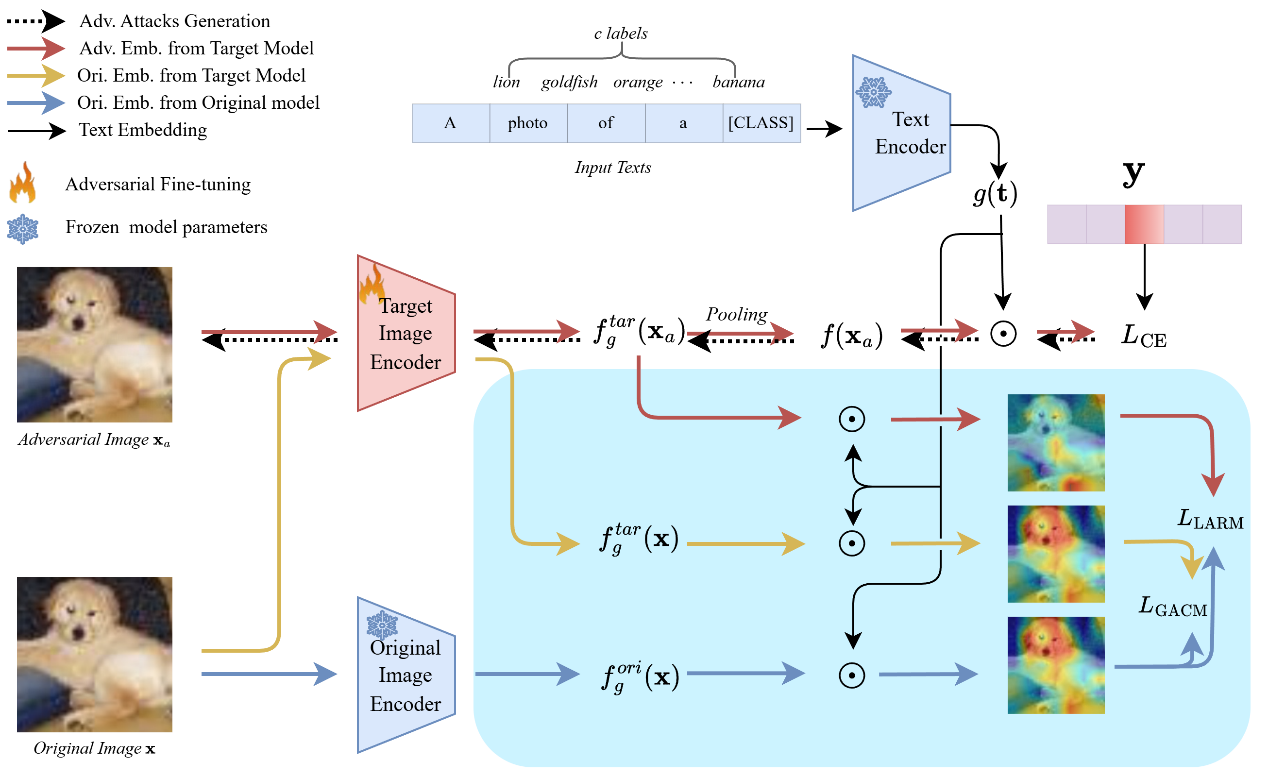

Addressing this cutting-edge problem, the paper uncovers a key phenomenon: adversarial perturbations not only alter image pixels but also significantly disrupt the model’s internal text-guided attention distribution, causing structural shifts in the regions the model focuses on. Building on this finding, the paper proposes TGA-ZSR, which enhances zero-shot adversarial robustness from the perspective of attention alignment without compromising the model’s original generalization ability. Further investigation reveals that foreground attention guided by single-class prompts may incorrectly attend to irrelevant regions in complex scenes, undermining model robustness. To address this, the paper introduces Complementary Text-Guided Attention (Comp-TGA), which integrates foreground and background attention guided by both class and non-class prompts, enabling more precise localization of target regions. Experimental results demonstrate that both methods achieve significant improvements in robustness across 16 benchmark datasets, further highlighting the pivotal role of attention mechanisms in improving model robustness.
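
For readers who want a concrete picture of the idea, the following is a minimal illustrative sketch in plain Python, not the paper's implementation. It assumes that a text-guided attention map can be approximated as a softmax over the cosine similarity between image patch features and a text embedding. The function names (`text_guided_attention`, `attention_shift`, `complementary_attention`) and the rule used to combine foreground and background attention are hypothetical and chosen only for illustration.

```python
import math


def _unit(vec):
    """Scale a vector to unit length (a zero vector is returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def text_guided_attention(patch_feats, text_emb):
    """Assumed form: softmax over patches of cosine similarity to the text embedding."""
    text = _unit(text_emb)
    sims = [sum(a * b for a, b in zip(_unit(p), text)) for p in patch_feats]
    peak = max(sims)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in sims]
    total = sum(exps)
    return [e / total for e in exps]


def attention_shift(clean_attn, adv_attn):
    """L2 distance between two attention maps; an alignment loss could penalize this."""
    return math.sqrt(sum((c - a) ** 2 for c, a in zip(clean_attn, adv_attn)))


def complementary_attention(fg_attn, bg_attn):
    """Hypothetical combination: keep foreground attention where background attention is low."""
    raw = [f * (1.0 - b) for f, b in zip(fg_attn, bg_attn)]
    total = sum(raw) or 1.0
    return [r / total for r in raw]


# Toy example: two patches with 2-D features, one class prompt, one non-class prompt.
patches = [[1.0, 0.0], [0.0, 1.0]]
fg = text_guided_attention(patches, [1.0, 0.0])   # class prompt embedding (made up)
bg = text_guided_attention(patches, [0.0, 1.0])   # non-class prompt embedding (made up)
combined = complementary_attention(fg, bg)
```

In this toy setting, `attention_shift` computed between the attention map of a clean image and that of its adversarial counterpart would quantify the kind of attention disruption the paper describes, and an alignment objective would aim to keep that value small.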

The first author of this paper is Professor Lu Yu from the School of Computer Science and Engineering, Tianjin University of Technology. The work was completed with the participation of first-year PhD student Haiyang Zhang and under the guidance of Professor Changsheng Xu.